ZDNET’s key takeaways

- Anthropic updated its AI training policy.

- Users can now opt in to having their chats used for training.

- This deviates from Anthropic’s previous stance.

Anthropic has become a leading AI lab, and one of its biggest draws has been its strict position on prioritizing consumer data privacy. Since the launch of Claude, its chatbot, Anthropic has taken a firm stance against using user data to train its models, departing from a common industry practice. That’s now changing.

Users can now opt in to having their data used to further train Anthropic’s models, the company said in a blog post updating its consumer terms and privacy policy. The collected data is meant to help improve the models, making them safer and more intelligent, according to the post.

While this change marks a sharp pivot from the company’s usual approach, users still have the option to keep their chats out of training. Keep reading to find out how.

Who does the change affect?

Before I get into how to turn it off, it is worth noting that not all plans are impacted. Commercial plans, including Claude for Work, Claude Gov, Claude for Education, and API usage, remain unchanged, even when accessed by third parties through cloud services like Amazon Bedrock and Google Cloud’s Vertex AI.

The updates apply to Claude Free, Pro, and Max plans, meaning that if you are an individual user, you will now be subject to the Updates to Consumer Terms and Policies and will be given the option to opt in or out of training.

How do you opt out?

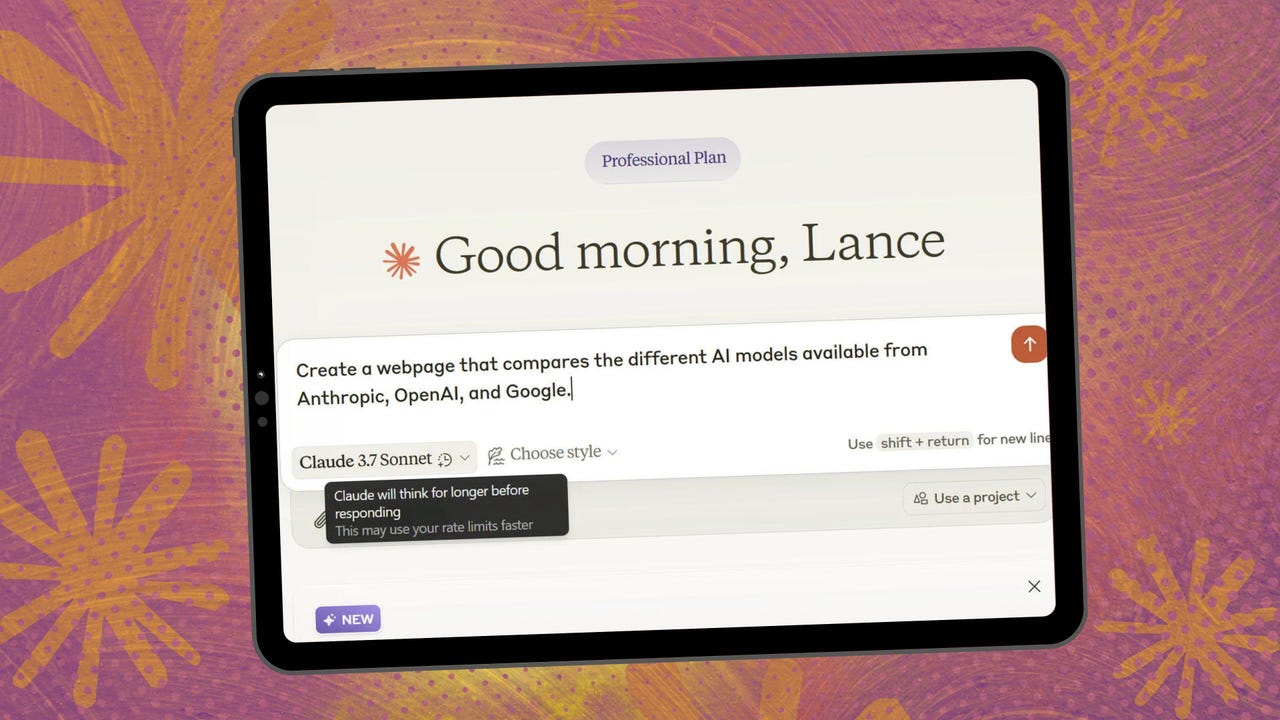

If you are an existing user, you will be shown a pop-up like the one above, asking you to opt in or out of having your chats and coding sessions used to train and improve Anthropic’s AI models. When the pop-up appears, read it carefully, because the bolded heading of the toggle doesn’t mention training outright. Instead, it says “You can help improve Claude,” which refers to the training feature. Anthropic does clarify this in a bolded statement underneath.

You have until Sept. 28 to make your selection, and once you do, it takes effect on your account automatically. If you allow your data to be used for training, Anthropic will only use new or resumed chats and coding sessions, not past ones. After Sept. 28, you will have to choose a model training preference to keep using Claude. You can reverse your decision at any time in Privacy Settings.

New users will be able to select their preference as they sign up. As mentioned above, it is worth paying close attention to the wording during sign-up, since the choice is likely to be framed as whether or not you want to help improve the model, and that framing could change. While it is true that your data will be used to improve the model, it is worth highlighting that training on it means Anthropic will be storing your data.

Data saved for five years

Another change to the Consumer Terms and Policies is that if you opt in to having your data used, the company will retain that data for five years. Anthropic says the longer retention period is necessary to support better model development and safety improvements.

When you delete a conversation with Claude, Anthropic says it will not be used for model training. If you don’t opt in to model training, the company’s existing 30-day data retention period applies. Again, none of this applies to accounts covered by the Commercial Terms.

Anthropic also said that users’ data won’t be sold to third parties, and that it uses tools to “filter or obfuscate sensitive data.”

Data is essential to how generative AI models are trained, and the models generally get smarter with additional data. As a result, companies are always vying for user data to improve their models. For example, Google recently made a similar move, renaming its “Gemini Apps Activity” setting to “Keep Activity.” When the setting is toggled on, Google says that starting Sept. 2, a sample of your uploads will be used to “help improve Google services for everyone.”