For most of the past decade, AI governance lived comfortably outside the systems it was meant to regulate. Policies were written. Reviews were conducted. Models were approved. Audits happened after the fact. As long as AI behaved like a tool—producing predictions or recommendations on demand—that separation mostly worked. That assumption is breaking down.

As AI systems move from assistive components to autonomous actors, governance imposed from the outside no longer scales. The problem isn’t that organizations lack policies or oversight frameworks. It’s that those controls are detached from where decisions are actually formed. Increasingly, the only place governance can operate effectively is inside the AI application itself, at runtime, while decisions are being made. This isn’t a philosophical shift. It’s an architectural one.

When AI Fails Quietly

One of the more unsettling aspects of autonomous AI systems is that their most consequential failures rarely look like failures at all. Nothing crashes. Latency stays within bounds. Logs look clean. The system behaves coherently—just not correctly. An agent escalates a workflow that should have been contained. A recommendation drifts slowly away from policy intent. A tool is invoked in a context that no one explicitly approved, yet no explicit rule was violated.

These failures are hard to detect because they emerge from behavior, not bugs. Traditional governance mechanisms don’t help much here. Predeployment reviews assume decision paths can be anticipated in advance. Static policies assume behavior is predictable. Post hoc audits assume intent can be reconstructed from outputs. None of those assumptions holds once systems reason dynamically, retrieve context opportunistically, and act continuously. At that point, governance isn’t missing—it’s simply in the wrong place.

The Scaling Problem No One Owns

Most organizations already feel this tension, even if they don’t describe it in architectural terms. Security teams tighten access controls. Compliance teams expand review checklists. Platform teams add more logging and dashboards. Product teams layer on more prompt constraints. Each layer helps a little. None of them addresses the underlying issue.

What’s really happening is that governance responsibility is being fragmented across teams that don’t own system behavior end-to-end. No single layer can explain why the system acted—only that it acted. As autonomy increases, the gap between intent and execution widens, and accountability becomes diffuse. This is a classic scaling problem. And like many scaling problems before it, the solution isn’t more rules. It’s a different system architecture.

A Familiar Pattern from Infrastructure History

We’ve seen this before. In early networking systems, control logic was tightly coupled to packet handling. As networks grew, this became unmanageable. Separating the control plane from the data plane allowed policy to evolve independently of traffic and made failures diagnosable rather than mysterious.

Cloud platforms went through a similar transition. Resource scheduling, identity, quotas, and policy moved out of application code and into shared control systems. That separation is what made hyperscale cloud viable. Autonomous AI systems are approaching a comparable inflection point.

Right now, governance logic is scattered across prompts, application code, middleware, and organizational processes. None of those layers was designed to assert authority continuously while a system is reasoning and acting. What’s missing is a control plane for AI—not as a metaphor but as a real architectural boundary.

What “Governance Inside the System” Actually Means

When people hear “governance inside AI,” they often imagine stricter rules baked into prompts or more conservative model constraints. That’s not what this is about.

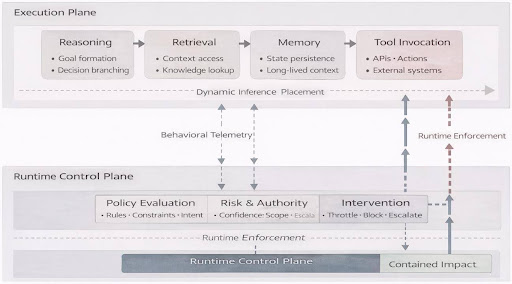

Embedding governance inside the system means separating decision execution from decision authority. Execution includes inference, retrieval, memory updates, and tool invocation. Authority includes policy evaluation, risk assessment, permissioning, and intervention. In most AI applications today, those concerns are entangled—or worse, implicit.

A control-plane-based design makes that separation explicit. Execution proceeds but under continuous supervision. Decisions are observed as they form, not inferred after the fact. Constraints are evaluated dynamically, not assumed ahead of time. Governance stops being a checklist and starts behaving like infrastructure.

Reasoning, retrieval, memory, and tool invocation operate in the execution plane, while a runtime control plane continuously evaluates policy, risk, and authority—observing and intervening without being embedded in application logic.
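
To make the separation concrete, here is a minimal sketch in Python. Everything in it is illustrative rather than drawn from any particular framework: the `ControlPlane` and `ExecutionPlane` classes, the `ProposedAction` shape, the `risk_score` field, and the two example policies are all assumptions made for this example. The point is only that the code that runs a tool never decides whether it may run.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict, List


class Verdict(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"


@dataclass
class ProposedAction:
    """What the execution plane wants to do, described before it happens."""
    tool: str
    args: dict
    risk_score: float  # hypothetical 0.0-1.0 estimate supplied by the caller


# A policy inspects a proposed action and returns a verdict.
Policy = Callable[[ProposedAction], Verdict]


class ControlPlane:
    """Holds decision authority; knows nothing about how tools are run."""

    def __init__(self, policies: List[Policy]) -> None:
        self.policies = policies

    def authorize(self, action: ProposedAction) -> Verdict:
        verdicts = [policy(action) for policy in self.policies]
        # The most restrictive verdict wins: deny, then escalate, then allow.
        if Verdict.DENY in verdicts:
            return Verdict.DENY
        if Verdict.ESCALATE in verdicts:
            return Verdict.ESCALATE
        return Verdict.ALLOW


class ExecutionPlane:
    """Runs tools, but only after the control plane has ruled on each call."""

    def __init__(self, tools: Dict[str, Callable[..., str]], control: ControlPlane) -> None:
        self.tools = tools
        self.control = control

    def invoke(self, action: ProposedAction) -> str:
        verdict = self.control.authorize(action)
        if verdict is not Verdict.ALLOW:
            return f"{verdict.value}: {action.tool}"
        return self.tools[action.tool](**action.args)


def refunds_need_review(action: ProposedAction) -> Verdict:
    # Hypothetical rule: refunds above 100 go to a human.
    if action.tool == "issue_refund" and action.args.get("amount", 0) > 100:
        return Verdict.ESCALATE
    return Verdict.ALLOW


def block_high_risk(action: ProposedAction) -> Verdict:
    return Verdict.DENY if action.risk_score >= 0.9 else Verdict.ALLOW


tools = {"issue_refund": lambda amount: f"refunded {amount}"}
plane = ExecutionPlane(tools, ControlPlane([refunds_need_review, block_high_risk]))

print(plane.invoke(ProposedAction("issue_refund", {"amount": 20}, 0.1)))   # refunded 20
print(plane.invoke(ProposedAction("issue_refund", {"amount": 500}, 0.1)))  # escalate: issue_refund
print(plane.invoke(ProposedAction("issue_refund", {"amount": 20}, 0.95)))  # deny: issue_refund
```

Because policies live in a list owned by the control plane, they can change without touching the tool code, which is the property the networking and cloud analogies point to.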

Where Governance Breaks First

In practice, governance failures in autonomous AI systems tend to cluster around three surfaces.

Reasoning. Systems form intermediate goals, weigh options, and branch decisions internally. Without visibility into those pathways, teams can’t distinguish acceptable variance from systemic drift.

Retrieval. Autonomous systems pull in context opportunistically. That context may be outdated, inappropriate, or out of scope—and once it enters the reasoning process, it’s effectively invisible unless explicitly tracked.

Action. Tool use is where intent becomes impact. Systems increasingly invoke APIs, modify records, trigger workflows, or escalate issues without human review. Static authorization models don’t map cleanly onto dynamic decision contexts.

These surfaces are interconnected, but they fail independently. Treating governance as a single monolithic concern leads to brittle designs and false confidence.
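
One way to avoid the monolithic trap is to give each surface its own check and report findings per surface. The sketch below assumes a simple event shape (a `surface` name plus a `detail` dictionary) and invents its thresholds, source names, and tool names purely for illustration.

```python
from typing import Callable, Dict, List

# Hypothetical policy data; a real system would load these from configuration.
APPROVED_SOURCES = {"policy_kb", "billing_docs"}
WRITE_TOOLS = {"update_record", "issue_refund"}
MAX_PLAN_STEPS = 8


def check_reasoning(detail: dict) -> List[str]:
    # Flag plans that grow well beyond what the task normally needs.
    if detail["plan_steps"] > MAX_PLAN_STEPS:
        return [f"plan has {detail['plan_steps']} steps (limit {MAX_PLAN_STEPS})"]
    return []


def check_retrieval(detail: dict) -> List[str]:
    # Flag context pulled from a source nobody approved for this agent.
    if detail["source"] not in APPROVED_SOURCES:
        return [f"context from unapproved source: {detail['source']}"]
    return []


def check_action(detail: dict) -> List[str]:
    # Flag write-capable tools that ran without a recorded human approval.
    if detail["tool"] in WRITE_TOOLS and not detail.get("human_approved", False):
        return [f"write tool {detail['tool']} invoked without approval"]
    return []


SURFACE_CHECKS: Dict[str, Callable[[dict], List[str]]] = {
    "reasoning": check_reasoning,
    "retrieval": check_retrieval,
    "action": check_action,
}


def evaluate(events: List[dict]) -> Dict[str, List[str]]:
    """Group findings by surface so one surface's failures never hide another's."""
    findings: Dict[str, List[str]] = {surface: [] for surface in SURFACE_CHECKS}
    for event in events:
        check = SURFACE_CHECKS[event["surface"]]
        findings[event["surface"]].extend(check(event["detail"]))
    return findings


events = [
    {"surface": "reasoning", "detail": {"plan_steps": 3}},
    {"surface": "retrieval", "detail": {"source": "web_search"}},
    {"surface": "action", "detail": {"tool": "issue_refund", "human_approved": False}},
]
report = evaluate(events)
for surface, items in report.items():
    print(surface, items)
```

Here the reasoning surface is clean while retrieval and action each raise a finding, and the report keeps them apart instead of collapsing them into a single pass or fail.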

Control Planes as Runtime Feedback Systems

A useful way to think about AI control planes is not as gatekeepers but as feedback systems. Signals flow continuously from execution into governance: confidence degradation, policy boundary crossings, retrieval drift, and action escalation patterns. Those signals are evaluated in real time, not weeks later during audits. Responses flow back: throttling, intervention, escalation, or constraint adjustment.

This is fundamentally different from monitoring outputs. Output monitoring tells you what happened. Control plane telemetry tells you why it was allowed to happen. That distinction matters when systems operate continuously and consequences compound over time.

Behavioral telemetry flows from execution into the control plane, where policy and risk are evaluated continuously. Enforcement and intervention feed back into execution before failures become irreversible.
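
The loop described above can be sketched in a few lines of Python. This is a toy under stated assumptions: the `FeedbackController` class, the rolling window, the two signals (a confidence value and a boundary-crossing flag), and the thresholds are all hypothetical, and a real control plane would track far more than two signals.

```python
from collections import deque
from statistics import mean


class FeedbackController:
    """Turns a stream of execution signals into a response for the next step."""

    def __init__(self, window: int = 3, min_confidence: float = 0.6,
                 max_crossings: int = 2) -> None:
        # Rolling windows: old observations fall off automatically.
        self.confidences = deque(maxlen=window)
        self.crossings = deque(maxlen=window)
        self.min_confidence = min_confidence
        self.max_crossings = max_crossings

    def observe(self, confidence: float, crossed_boundary: bool) -> str:
        self.confidences.append(confidence)
        self.crossings.append(crossed_boundary)
        # Repeated policy boundary crossings are the stronger signal.
        if sum(self.crossings) >= self.max_crossings:
            return "escalate"
        # Sustained confidence degradation slows the agent down.
        if mean(self.confidences) < self.min_confidence:
            return "throttle"
        return "continue"


controller = FeedbackController()
signals = [(0.9, False), (0.8, False), (0.5, False), (0.4, True), (0.3, True)]
responses = [controller.observe(conf, crossed) for conf, crossed in signals]
print(responses)
# ['continue', 'continue', 'continue', 'throttle', 'escalate']
```

No single observation triggers a response here. The controller throttles only once average confidence in the window drops below the threshold, and escalates only when crossings repeat, which is what distinguishes a feedback loop from a per-call gate.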

A Failure Story That Should Sound Familiar

Consider a customer-support agent operating across billing, policy, and CRM systems.

Over several months, policy documents are updated. Some are reindexed quickly. Others lag. The agent continues to retrieve context and reason coherently, but its decisions increasingly reflect outdated rules. No single action violates policy outright. Metrics remain stable. Customer satisfaction erodes slowly.

Eventually, an audit flags a noncompliant action. At that point, teams scramble. Logs show what the agent did but not why. They can’t reconstruct which documents influenced which decisions, when those documents were last updated, or why the agent believed its actions were valid at the time.

This isn’t a logging failure. It’s the absence of a governance feedback loop. A control plane wouldn’t prevent every mistake, but it would surface drift early—when intervention is still cheap.
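
As a rough illustration of what surfacing drift early could look like in this scenario, the sketch below records which documents fed each decision and compares when each document last changed with when the index last ingested it. The `RetrievedDoc` and `DecisionTrace` types, their field names, and the dates are invented for the example.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class RetrievedDoc:
    doc_id: str
    source_updated: datetime  # when the policy document last changed
    indexed_at: datetime      # when the retrieval index last ingested it

    @property
    def stale(self) -> bool:
        # The index holds a version older than the current document.
        return self.indexed_at < self.source_updated


@dataclass
class DecisionTrace:
    decision_id: str
    action: str
    docs: List[RetrievedDoc]

    def stale_docs(self) -> List[str]:
        return [doc.doc_id for doc in self.docs if doc.stale]


def stale_decision_rate(traces: List[DecisionTrace]) -> float:
    """Fraction of decisions that relied on at least one stale document."""
    if not traces:
        return 0.0
    return sum(1 for trace in traces if trace.stale_docs()) / len(traces)


fresh = RetrievedDoc("billing-faq", datetime(2025, 1, 10), datetime(2025, 1, 12))
lagging = RetrievedDoc("refund-policy", datetime(2025, 3, 1), datetime(2025, 1, 12))

traces = [
    DecisionTrace("d1", "answer_question", [fresh]),
    DecisionTrace("d2", "answer_question", [fresh]),
    DecisionTrace("d3", "issue_refund", [fresh, lagging]),
    DecisionTrace("d4", "answer_question", [fresh]),
]

print(traces[2].stale_docs())       # ['refund-policy']
print(stale_decision_rate(traces))  # 0.25
```

With traces like these, the questions the audit could not answer (which documents influenced which decision, and how current they were) become lookups, and a rising stale-decision rate is a signal that can be acted on before an audit finds the result.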

Why External Governance Can’t Catch Up

It’s tempting to believe better tooling, stricter reviews, or more frequent audits will solve this problem. They won’t.

External governance operates on snapshots. Autonomous AI operates on streams. The mismatch is structural. By the time an external process observes a problem, the system has already moved on—often repeatedly. That doesn’t mean governance teams are failing. It means they’re being asked to regulate systems whose operating model has outgrown their tools. The only viable alternative is governance that runs at the same cadence as execution.

Authority, Not Just Observability

One subtle but important point: Control planes aren’t just about visibility. They’re about authority.

Observability without enforcement creates a false sense of safety. Seeing a problem after it occurs doesn’t prevent it from recurring. Control planes must be able to act—to pause, redirect, constrain, or escalate behavior in real time.

That raises uncomfortable questions. How much autonomy should systems retain? When should humans intervene? How much latency is acceptable for policy evaluation? There are no universal answers. But those trade-offs can only be managed if governance is designed as a first-class runtime concern, not an afterthought.
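
The latency question in particular can be made explicit in code. The following sketch gives policy evaluation a time budget and falls back to escalation when the budget is exceeded. The function name `evaluate_with_budget`, the budget value, and the choice to fail closed are assumptions for illustration; some systems may reasonably choose to fail open for low-risk actions.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout
from typing import Callable

# Shared worker pool for policy checks.
_pool = ThreadPoolExecutor(max_workers=4)


def evaluate_with_budget(policy: Callable[[dict], bool], action: dict,
                         budget_s: float) -> str:
    """Run a policy check, but never wait longer than budget_s seconds."""
    future = _pool.submit(policy, action)
    try:
        allowed = future.result(timeout=budget_s)
    except FutureTimeout:
        # Fail closed: with no verdict in time, hand the decision to a human.
        return "escalate"
    return "allow" if allowed else "deny"


def fast_policy(action: dict) -> bool:
    return action["amount"] <= 100


def slow_policy(action: dict) -> bool:
    time.sleep(0.5)  # stands in for a slow remote risk service
    return True


print(evaluate_with_budget(fast_policy, {"amount": 20}, budget_s=0.2))   # allow
print(evaluate_with_budget(fast_policy, {"amount": 500}, budget_s=0.2))  # deny
print(evaluate_with_budget(slow_policy, {"amount": 20}, budget_s=0.2))   # escalate
```

Note that the timed-out check keeps running in its worker thread; the sketch only stops waiting for it. Whatever the fallback, writing it down as code forces the trade-off between autonomy, human intervention, and latency to be decided deliberately rather than by accident.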

The Architectural Shift Ahead

The move from guardrails to control loops mirrors earlier transitions in infrastructure. Each time, the lesson was the same: Static rules don’t scale under dynamic behavior. Feedback does.

AI is entering that phase now. Governance won’t disappear. But it will change shape. It will move inside systems, operate continuously, and assert authority at runtime. Organizations that treat this as an architectural problem rather than a compliance exercise will adapt faster and fail more gracefully. Those that don’t will spend the next few years chasing incidents they can see but never quite explain.

Closing Thought

Autonomous AI doesn’t require less governance. It requires governance that understands autonomy.

That means moving beyond policies as documents and audits as events. It means designing systems where authority is explicit, observable, and enforceable while decisions are being made. In other words, governance must become part of the system—not something applied to it.
