Most enterprise AI projects do not fail because of weak models. They fail because global teams cannot execute AI delivery at scale.

One team trains models in one geography. Another handles deployment somewhere else. Governance lives in disconnected spreadsheets. Sprint priorities change across time zones. Tooling is inconsistent. Ownership is fragmented. By the time the AI workload reaches production, velocity collapses under operational chaos. This is the real AI bottleneck nobody wants to talk about.

Enterprises are investing millions in AI transformation while still managing delivery through outdated offshore structures built for traditional software development. That model breaks fast when AI pipelines, MLOps, automation, governance, and real-time decision-making enter the picture. AI workloads demand operational alignment, not isolated execution.

That is exactly why GCC-backed (Global Capability Center) pod models are becoming the new foundation for scalable AI delivery. Instead of fragmented outsourcing teams working in silos, GCC pods create shared ownership, standardized AI workflows, embedded governance, and continuous execution alignment across offshore and nearshore operations.

The result is not just faster delivery. It is predictable AI execution: better governance, cleaner handoffs, stronger accountability, and AI systems that actually make it to production without burning through time, budget, and leadership patience. Because at scale, AI success is no longer a model problem. It is an operational governance problem.

Why Global AI Teams Struggle with Pipeline Governance

Most global AI teams are operating on disconnected execution models. Offshore AI development teams, nearshore engineering units, and in-house stakeholders often work with different priorities, tools, and delivery expectations. The result is fragmented AI pipeline governance where model development, testing, deployment, and monitoring lose alignment across the workflow. AI workloads slow down not because of technical limitations, but because operational coordination breaks under scale.

The second problem is inconsistent AI/ML workflows across distributed teams. One team may follow strict MLOps governance while another relies on manual deployment processes and undocumented changes. This creates version conflicts, poor model reproducibility, delayed sprint execution, and unstable production AI environments. Without standardized AI pipeline governance, enterprises end up scaling confusion instead of scaling intelligence.

Time-zone separation makes the problem worse. Offshore and nearshore AI teams often operate with weak shared context, reactive communication, and limited accountability structures. Critical decisions get delayed between handoffs. Sprint priorities drift. AI deployment issues remain unresolved longer than they should. Over time, the organization loses delivery predictability, governance visibility, and confidence in its ability to operationalize enterprise AI at scale.

What AI Pipeline Governance Actually Means

AI pipeline governance is not about adding more approvals, meetings, or process layers. It is about creating execution consistency across AI development, MLOps workflows, model deployment, data governance, and production AI operations. When global AI teams work across offshore and nearshore environments, governance becomes the system that standardizes how AI workloads are built, tested, monitored, secured, and scaled. Without a strong AI governance framework, enterprises end up with fragmented AI pipelines, inconsistent model behavior, deployment failures, and little operational visibility.

Effective AI pipeline governance creates alignment across the entire AI lifecycle. That includes standardized AI/ML workflows, version control, automated testing, CI/CD for AI models, compliance tracking, sprint accountability, and shared delivery metrics across distributed AI engineering teams. In practical terms, governance ensures that every AI initiative follows the same operational discipline regardless of geography, team structure, or time zone. The companies scaling enterprise AI successfully are not the ones building the most models. They are the ones building predictable, governed, and repeatable AI delivery systems.
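To make "the same operational discipline regardless of geography" concrete, one option is to have every team stamp each release with the same reproducibility fingerprint. The sketch below is illustrative only: the function name `release_fingerprint` and the manifest fields are assumptions made for this example, not part of any specific MLOps product.

```python
import hashlib
import json


def release_fingerprint(code_commit: str, dataset_sha256: str,
                        model_sha256: str, params: dict) -> str:
    """Return a stable SHA-256 fingerprint for one model release.

    All field names here are hypothetical; adapt them to whatever
    your pipeline actually records.
    """
    manifest = {
        "code_commit": code_commit,        # commit the training code was built from
        "dataset_sha256": dataset_sha256,  # hash of the training data snapshot
        "model_sha256": model_sha256,      # hash of the serialized model artifact
        "params": params,                  # training hyperparameters
    }
    # sort_keys gives the same JSON text no matter how the dicts were ordered,
    # so two teams recording the same release get the same fingerprint.
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

If an offshore and a nearshore team compute different fingerprints for what should be the same release, something in the code, data, model, or parameters has diverged, and the mismatch surfaces before deployment rather than after.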

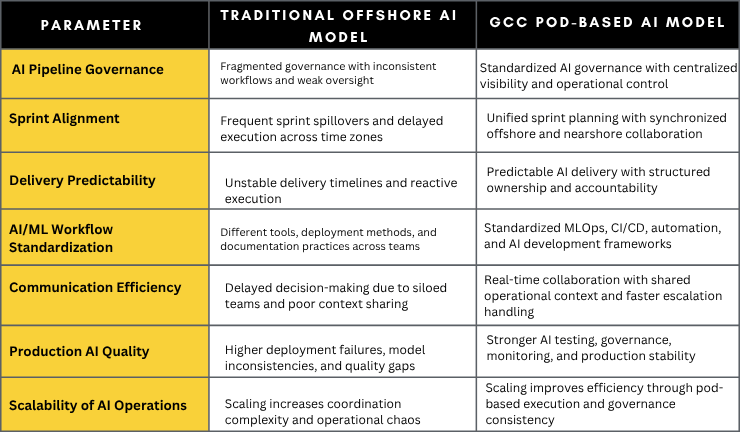

Top 7 Metrics to Evaluate Traditional Offshore AI Models vs GCC Pod Models

How GCC Pods Create Alignment Across Offshore and Nearshore AI Teams

Standardized Tooling

One of the biggest reasons global AI teams fail is workflow inconsistency. Different teams use different AI development environments, deployment methods, testing frameworks, and documentation standards. GCC pods eliminate this fragmentation by standardizing the entire AI technology stack across offshore and nearshore teams. From MLOps workflows and CI/CD pipelines to AI monitoring and version control, every team operates within the same execution framework.

Unified Sprint Planning

Traditional offshore AI teams often work on disconnected sprint cycles that create delays, rework, and delivery confusion. GCC pod models solve this by aligning sprint planning, delivery priorities, and execution timelines across all regions. Offshore and nearshore AI teams operate with shared roadmaps, synchronized goals, and continuous delivery visibility, which improves AI pipeline governance and accelerates production readiness.

Cross-Functional Collaboration

AI delivery cannot scale when data engineers, AI developers, DevOps teams, QA specialists, and business stakeholders operate in isolation. GCC pods bring cross-functional AI teams into a single operational structure with shared ownership and accountability. This reduces communication gaps, improves decision-making speed, and creates stronger alignment between AI strategy, engineering execution, and business outcomes.

Embedded Automation

Manual AI operations slow down enterprise scalability and increase production risk. GCC-backed AI delivery models integrate automation directly into AI pipelines through automated testing, CI/CD for AI models, deployment validation, monitoring, and workflow orchestration. Embedded automation reduces reliance on manual intervention, improves execution consistency, and enables distributed AI teams to deliver faster without compromising governance or quality.
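As a rough illustration of deployment validation, a pipeline could run a small gate before any model is promoted. This is a minimal sketch under stated assumptions: the `ReleaseCandidate` fields, the `gate_failures` function, and the 0.90 accuracy threshold are all hypothetical choices for this example, not a prescribed standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReleaseCandidate:
    # Hypothetical fields; a real pipeline would pull these from CI and a model registry.
    model_version: str
    tests_passed: bool
    eval_accuracy: float
    dataset_version: str = ""
    approved_by: str = ""


def gate_failures(rc: ReleaseCandidate, min_accuracy: float = 0.90) -> List[str]:
    """Return the reasons a candidate is blocked; an empty list means it may deploy."""
    failures = []
    if not rc.tests_passed:
        failures.append("automated tests did not pass")
    if rc.eval_accuracy < min_accuracy:
        failures.append(
            f"accuracy {rc.eval_accuracy:.2f} below threshold {min_accuracy:.2f}"
        )
    if not rc.dataset_version:
        failures.append("dataset version not recorded")
    if not rc.approved_by:
        failures.append("no approver recorded")
    return failures
```

Returning every failed check, rather than stopping at the first one, gives a team picking up the handoff in another time zone the full list of what to fix in a single pass.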

Real-Time Governance Visibility

Most enterprises struggle with AI governance because leadership lacks visibility into what is happening across distributed teams. GCC pod models create centralized governance visibility through shared dashboards, workflow tracking, compliance monitoring, sprint reporting, and AI performance analytics. Decision-makers gain real-time insight into delivery health, operational bottlenecks, deployment risks, and AI workload progress across offshore and nearshore operations.

The Operational Stack Behind Scalable AI Delivery

- MLOps Automation: Automates AI model training, deployment, retraining, and workflow orchestration to improve scalability, execution consistency, and operational efficiency across distributed AI teams.

- CI/CD for AI: Standardizes continuous integration and deployment pipelines for AI models, enabling faster releases, stable deployments, and predictable production delivery.

- Governance Checkpoints: Establishes validation controls across AI workflows, data usage, compliance, testing, and deployment to ensure secure and governed AI operations.

- Shared Observability: Creates centralized visibility into AI pipeline performance, system health, deployment status, and operational bottlenecks across offshore and nearshore teams.

- AI QA Automation: Automates AI testing, model validation, bias detection, and performance checks to reduce production failures and improve AI delivery quality.

- Version Control Discipline: Maintains consistent tracking of AI models, datasets, workflows, and code changes to eliminate conflicts and improve reproducibility.

- Documentation Systems: Centralizes operational knowledge, AI workflows, governance standards, and sprint decisions to improve collaboration and long-term scalability.

- AI Monitoring Workflows: Continuously monitors model accuracy, drift, system behavior, and AI performance to maintain production stability and governance compliance.
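For the monitoring item above, one widely used drift signal is the Population Stability Index (PSI), which compares how a feature or score is distributed in production against a baseline. The sketch below is one simple way to compute it, assuming equal-width bins derived from the baseline and non-empty inputs; the function name `psi` and the bin count are choices made for this example.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a production sample.

    Assumes both sequences are non-empty. Bin edges come from the baseline range.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        eps = 1e-6  # floor so empty bins do not produce log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    # PSI = sum over bins of (actual - expected) * ln(actual / expected)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Identical distributions give a PSI of zero, and the value grows as production data moves away from the baseline. Teams often treat values above roughly 0.2 to 0.25 as a prompt to investigate, but that cutoff is a rule of thumb and should be tuned per model.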

Signs Your AI Delivery Model Is Already Breaking

- Sprint spillovers are becoming routine, and AI workloads consistently miss production timelines.

- AI releases feel unpredictable because deployment quality, testing standards, and delivery processes vary across teams.

- Offshore and nearshore AI teams are using different workflows, tools, and documentation practices with little operational consistency.

- The same production bugs, model failures, and AI performance issues keep resurfacing across multiple release cycles.

- Offshore AI teams rely heavily on constant escalations, approvals, and intervention from internal leadership to keep execution moving.

- AI governance exists in presentations and policy documents, but not in actual day-to-day AI operations, workflows, or delivery accountability.

How ISHIR’s GCC Pods Help Enterprises Scale AI Delivery with Governance and Agility

ISHIR helps enterprises fix fragmented AI delivery through GCC-backed Agile Pods designed for scalable AI execution. Instead of disconnected offshore resources, ISHIR builds cross-functional AI pods aligned around shared ownership, standardized workflows, sprint accountability, and enterprise AI governance. This creates faster AI delivery, stronger operational visibility, and predictable execution across offshore and nearshore teams without the coordination chaos that slows most AI initiatives.

Through its Enterprise AI services, GCC-on-demand talent model, and Talent Accelerator framework, ISHIR enables organizations to scale AI engineering capabilities without compromising governance or delivery quality. From MLOps automation and AI pipeline governance to AI QA, deployment, and operational support, ISHIR creates AI delivery systems that are built for production scale, business continuity, and long-term enterprise growth.

AI projects do not fail from lack of innovation. They fail from broken execution across global teams.

FAQs

Q. Why do offshore AI projects fail during production deployment?

Most offshore AI projects fail because the delivery model is fragmented. Different teams use different AI workflows, deployment standards, and governance practices, which creates operational gaps during production scaling. Poor AI pipeline governance, weak sprint alignment, and inconsistent MLOps processes lead to delayed releases, model instability, and recurring production failures. Enterprises often discover too late that their AI strategy lacks execution consistency.

Q. What is AI pipeline governance and why is it critical for enterprise AI?

AI pipeline governance is the framework that standardizes how AI models are developed, tested, deployed, monitored, and managed across the enterprise AI lifecycle. It helps organizations maintain compliance, improve AI model reliability, reduce deployment risks, and create operational consistency across global AI teams. Without AI governance frameworks and MLOps discipline, enterprises struggle to scale AI beyond proof-of-concept environments.

Q. How do GCC pod models improve offshore and nearshore AI delivery?

GCC pod models replace siloed outsourcing structures with integrated AI delivery teams that operate with shared accountability, standardized workflows, and centralized governance visibility. Offshore and nearshore AI teams work within the same sprint cycles, AI development standards, and operational frameworks. This improves collaboration, reduces delivery friction, and creates predictable enterprise AI execution across regions.

Q. What are the biggest AI governance challenges for global enterprises?

The biggest AI governance challenges include inconsistent AI/ML workflows, fragmented tooling, weak documentation, model drift, lack of operational visibility, and poor coordination between distributed AI engineering teams. Many enterprises also struggle with AI compliance, deployment accountability, and real-time monitoring as AI workloads scale globally. Governance becomes significantly harder when offshore and nearshore teams operate without standardized AI delivery systems.

Q. How does MLOps help scale enterprise AI operations?

MLOps creates standardized workflows for AI model training, testing, deployment, monitoring, and retraining across the machine learning lifecycle. It combines automation, CI/CD for AI, governance controls, observability, and version management to improve AI scalability and production reliability. Enterprises using MLOps frameworks can reduce deployment delays, improve AI quality, and operationalize AI faster across distributed engineering teams.

Q. Why do AI initiatives struggle to move beyond proof of concept?

Most AI initiatives fail to scale because enterprises focus heavily on models while ignoring operational execution. AI pilots often succeed in controlled environments, but production AI requires governance, automation, monitoring, standardized workflows, and cross-functional collaboration. Without a scalable AI operating model, organizations face deployment bottlenecks, inconsistent delivery quality, and rising operational complexity.

Q. What is the difference between traditional AI outsourcing and GCC-based AI operations?

Traditional AI outsourcing focuses on resource augmentation and task-based execution, while GCC-based AI operations focus on integrated delivery ownership, governance, and long-term scalability. GCC pod models create centralized AI governance, shared sprint accountability, standardized MLOps workflows, and operational visibility across offshore and nearshore teams. This makes AI delivery faster, more predictable, and easier to scale at the enterprise level.

Q. How can enterprises improve AI delivery consistency across global teams?

Enterprises can improve AI delivery consistency by standardizing AI development workflows, implementing MLOps automation, centralizing governance visibility, and aligning offshore and nearshore teams through GCC pod structures. Strong AI pipeline governance ensures every team follows the same deployment standards, sprint planning process, monitoring workflows, and operational controls. Consistency in execution is what ultimately enables scalable enterprise AI delivery.